Artificial intelligence systems have become an almost essential aspect of the modern world. From semi-autonomous cars to educational tools and workplace assistants, demand for efficient AI systems will only increase as more programs are developed.

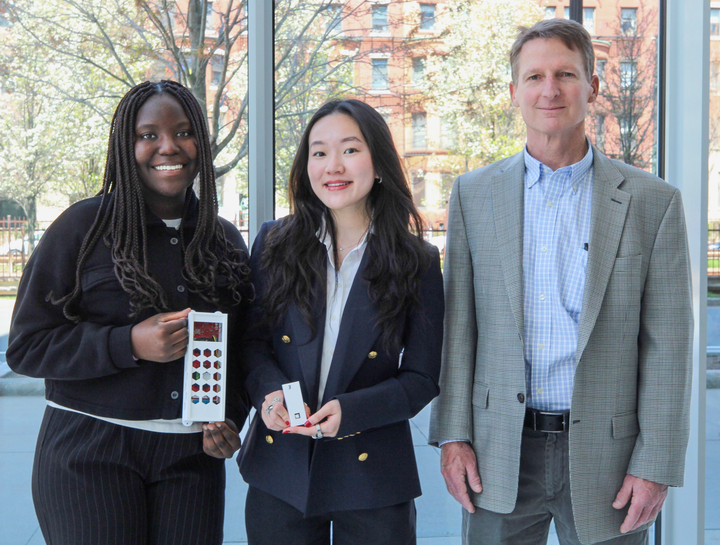

A new AI simulator called SoundSpaces was introduced Monday at a Zoom webinar hosted by Boston University’s Rafik B. Hariri Institute for Computing and Computational Science and Engineering. The event showcased how the program lets an independent “agent” guide itself through 3D environments to noise-producing objects.

The system works by placing the agent in a 3D simulation of an environment, such as a house. The agent hears a noise and navigates toward its source using audio-visual cues, combining visuals and echolocation to find it.
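As a rough illustration of what "moving toward a sound's source" can mean, the toy sketch below puts an agent on a small 2D grid and has it step to whichever neighboring cell sounds loudest. The grid, the inverse-square `loudness` function and the greedy movement rule are all hypothetical simplifications made up for this example. They are not SoundSpaces' acoustic simulation or the way its agent decides where to go.

```python
# Toy illustration only: a greedy agent on an open 2D grid that always steps
# to the neighboring cell where the sound is loudest. The loudness model and
# the movement rule are assumptions for this sketch, not SoundSpaces itself.

def loudness(cell, source):
    """Hypothetical inverse-square falloff: louder when closer to the source."""
    dr, dc = cell[0] - source[0], cell[1] - source[1]
    return 1.0 / (1.0 + dr * dr + dc * dc)


def navigate(start, source, rows, cols, max_steps=100):
    """Step to the loudest in-bounds neighbor until the source is reached."""
    path = [start]
    pos = start
    for _ in range(max_steps):
        if pos == source:
            break
        # Candidate moves: up, down, left, right, limited to the grid.
        neighbors = [
            (pos[0] + dr, pos[1] + dc)
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1))
            if 0 <= pos[0] + dr < rows and 0 <= pos[1] + dc < cols
        ]
        pos = max(neighbors, key=lambda cell: loudness(cell, source))
        path.append(pos)
    return path


if __name__ == "__main__":
    # The agent starts in one corner; the sound source sits elsewhere in the room.
    print(navigate(start=(0, 0), source=(3, 4), rows=5, cols=6))
```

In an open room this greedy rule always reaches the source, but it has no notion of walls, echoes or competing noises, which is exactly the kind of complexity a realistic simulator has to handle.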

“We’re exploring how to achieve spatial understanding from audio-visual observations,” speaker Kristen Grauman noted at the event. “What’s really unique here is thinking about it as something that could be a learnable path.”

Grauman is a professor of computer science at the University of Texas at Austin and is currently researching computer vision and machine learning for Facebook AI Research (FAIR), along with the SoundSpaces work. She said the research is designed to help the agent move toward audio stimuli.

“The challenge is,” Grauman said at the event, “you want an agent that can be dropped even into a new environment that isn’t yet mapped out, and still be intelligent about moving as a function of what it sees in its camera.”

Developed in part by UT Austin, SoundSpaces is the “first-of-its-kind” platform to incorporate audio perception alongside visual perception in an AI agent’s detection process.

Though the AI is being studied only in simulations for now, Changan Chen, a graduate student at UT Austin and a visiting researcher for FAIR, said the program has the potential to one day help visually impaired or hearing-impaired individuals better detect their surroundings and to assist in an emergency.

“Currently, it’s aimed for AI-assistant robot at home,” he said in an interview, “and secondly, it’s for people to better understand the speech, connecting the space with the hearing.”

Grauman said at Monday’s event that the shift to a more audio-centric model is much needed.

“There is a key thing missing that we’d like to address head on, and that’s what this talk is about,” she said. “These agents have been living in silent environments, and they are deaf.”

Event host Kate Saenko, an associate professor of computer science at BU, said in an interview that this type of dynamic research brings AI one step closer to mirroring human intelligence, a key facet of the field’s overall goal.

“They’re using not only the vision modality and not only the depth perception on the agent, but also the visual depth perception,” Saenko said. “This is an active exploration of the agent’s environment.”

Saenko also said SoundSpaces is goal-oriented, processing and interpreting noise for the “purpose of navigation.”

“It’s the first-of-its-kind acoustic simulation platform for audio-visual embodied AI research,” Saenko said. “Basically, think of it as a Roomba but more advanced.”

At the event, attendees asked what happens when a space becomes acoustically complicated, with other noises such as dogs barking or car alarms wailing that could distract the agent from the sound it is supposed to follow.

This is where the system gets interesting, Chen said: researchers are developing methods to create more complex navigation tasks that help train the agent’s cognitive learning skills.

“What we are proposing is to make the robot have multi-sensory experience in the simulation,” he said.

These audio aspects of the simulation help create a multi-dimensional world of sound and space, one that helps the agent perform increasingly difficult tasks while navigating an unknown virtual environment, Grauman said at the event.

“We’re going to have an agent train itself through experience to come up with a policy that tells it how it wants to move, based on what it’s currently seeing, or has seen in the past,” Grauman said, “as well as what it’s currently hearing or has heard in the past.”

The system also has great potential to make an impact in the future, Chen said.

“Eventually, we want to move on into the real world and enable the rules in the real world to do whatever [the agent] can do in the simulation,” he said. “Maybe a fire alarm going off, and then the robot can basically find it and let you know something’s wrong with both the visual and hearing ability. That will be really cool.”